Page 33 - FIGI - Big data, machine learning, consumer protection and privacy


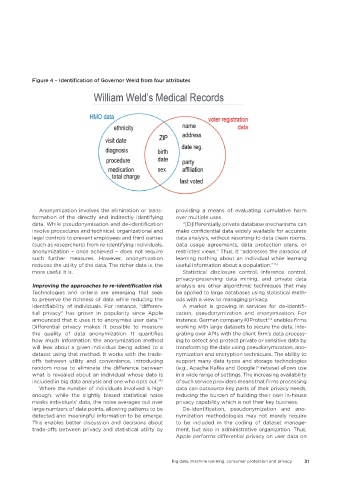

Figure 4 – Identification of Governor Weld from four attributes
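The kind of linkage depicted in Figure 4 can be sketched in a few lines. This is a minimal illustration, not a reproduction of the figure's data: the records, the names, and the choice of three quasi-identifiers (`zip`, `birth_date`, `sex`) are all hypothetical. A person is re-identified when their combination of attributes is unique both in a "de-identified" dataset and in a public register that carries names.

```python
from collections import Counter

# Hypothetical "de-identified" medical records: names removed,
# quasi-identifiers kept. All values are invented for illustration.
medical = [
    {"zip": "00001", "birth_date": "1950-01-15", "sex": "M", "diagnosis": "condition A"},
    {"zip": "00001", "birth_date": "1962-03-02", "sex": "F", "diagnosis": "condition B"},
    {"zip": "00002", "birth_date": "1950-01-15", "sex": "M", "diagnosis": "condition C"},
    {"zip": "00002", "birth_date": "1950-01-15", "sex": "M", "diagnosis": "condition D"},
]

# Hypothetical public register that pairs names with the same attributes.
register = [
    {"name": "Person One", "zip": "00001", "birth_date": "1950-01-15", "sex": "M"},
    {"name": "Person Two", "zip": "00002", "birth_date": "1950-01-15", "sex": "M"},
    {"name": "Person Three", "zip": "00002", "birth_date": "1950-01-15", "sex": "M"},
]

# Assumed attribute set; the figure's own list is not reproduced here.
QUASI_IDENTIFIERS = ("zip", "birth_date", "sex")


def key(record):
    """Project a record onto its quasi-identifier values."""
    return tuple(record[attr] for attr in QUASI_IDENTIFIERS)


def link(medical_rows, register_rows):
    """Return {name: diagnosis} for people whose quasi-identifier
    combination is unique in both datasets."""
    med_counts = Counter(key(r) for r in medical_rows)
    reg_counts = Counter(key(r) for r in register_rows)
    med_by_key = {key(r): r for r in medical_rows}
    matches = {}
    for person in register_rows:
        k = key(person)
        # Only an unambiguous one-to-one match counts as re-identification.
        if med_counts[k] == 1 and reg_counts[k] == 1:
            matches[person["name"]] = med_by_key[k]["diagnosis"]
    return matches


reidentified = link(medical, register)
print(reidentified)
```

In this toy data only "Person One" is singled out; the two people who share the same attribute combination remain ambiguous, which is the intuition behind grouping-based protections.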

Anonymization involves the elimination or transformation of the directly and indirectly identifying data. While pseudonymization and de-identification involve procedures and technical, organizational and legal controls to prevent employees and third parties (such as researchers) from re-identifying individuals, anonymization, once achieved, does not require such further measures. However, anonymization reduces the utility of the data. The richer data is, the more useful it is.

Improving the approaches to re-identification risk

Technologies and criteria are emerging that seek to preserve the richness of data while reducing the identifiability of individuals. For instance, “differential privacy” has grown in popularity since Apple announced that it uses it to anonymize user data.¹⁵¹ Differential privacy makes it possible to measure the quality of data anonymization. It quantifies how much information the anonymization method will leak about a given individual being added to a dataset using that method. It works with the trade-offs between utility and convenience, introducing random noise to limit the difference between what is revealed about an individual whose data is included in big data analysis and one who opts out.¹⁵² Where the number of individuals involved is high enough, the slightly biased statistical noise masks individuals’ data but averages out over large numbers of data points, allowing patterns to be detected and meaningful information to emerge. This enables better discussion and decisions about trade-offs between privacy and statistical utility by providing a means of evaluating cumulative harm over multiple uses.

“[D]ifferentially private database mechanisms can make confidential data widely available for accurate data analysis, without resorting to data clean rooms, data usage agreements, data protection plans, or restricted views.” Thus, it “addresses the paradox of learning nothing about an individual while learning useful information about a population.”¹⁵³

Statistical disclosure control, inference control, privacy-preserving data mining, and private data analysis are other algorithmic techniques that may be applied to large databases using statistical methods with a view to managing privacy.

A market is growing in services for de-identification, pseudonymization and anonymization. For instance, German company KIProtect¹⁵⁴ enables firms working with large datasets to secure the data, integrating over APIs with the client firm’s data processing to detect and protect private or sensitive data by transforming the data using pseudonymization, anonymization and encryption techniques. The ability to support many data types and storage technologies (e.g., Apache Kafka and Google Firebase) allows use in a wide range of settings. The increasing availability of such service providers means that firms processing data can outsource key parts of their privacy needs, reducing the burden of building their own in-house privacy capability, which is not their key business.

De-identification, pseudonymization and anonymization methodologies may need to be built not merely into the coding of dataset management, but also into administrative organization. Thus, Apple performs differential privacy on user data on
